Mosaic AI Framework

A Databricks session on Mosaic AI patterns for building, evaluating and deploying production-quality AI applications.

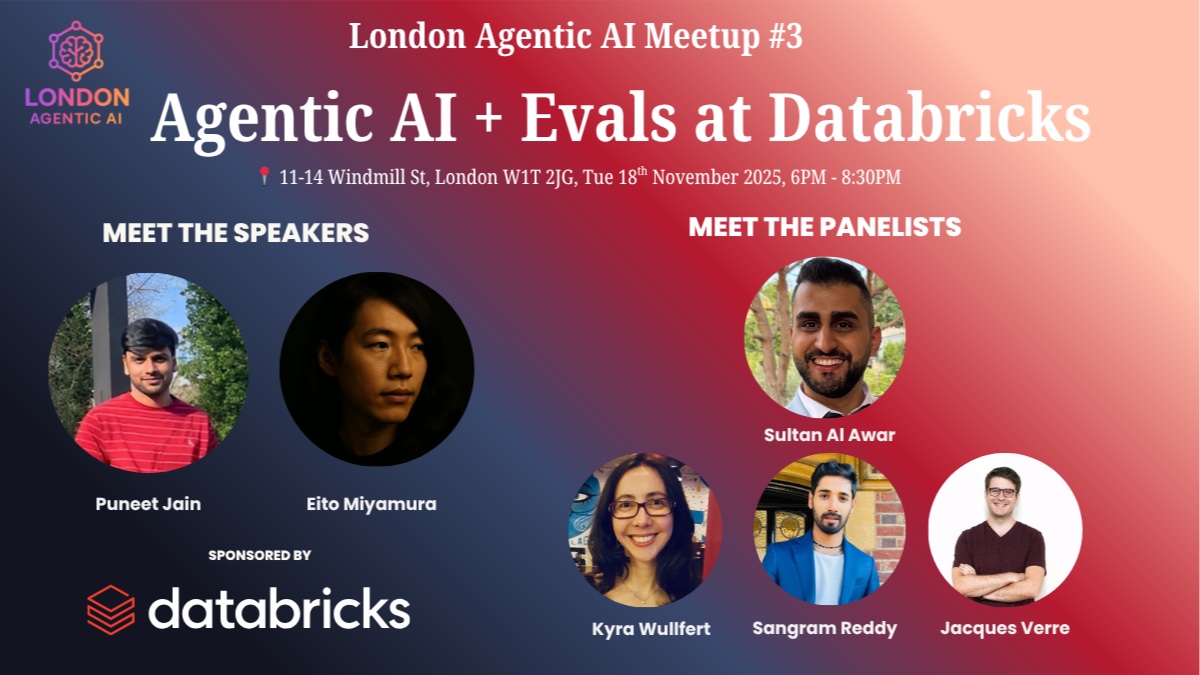

A London Agentic AI meetup at Databricks focused on agent evaluation, Mosaic AI, DSPy, context engineering and production-quality AI applications.

Hosts, sponsors and speaker organisations represented at this London Agentic AI stop.

The Databricks event focused on how teams can evaluate and improve agentic AI systems before they become a production risk. The theme connected Mosaic AI, DSPy, context engineering and practical evaluation strategies for production-grade AI applications.

The session gave London AI builders a venue to discuss how agent behaviour, retrieval quality, workflow reliability and deployment constraints can be measured with real evaluation loops.

A concise briefing on the technical context behind the event: why the topic mattered to the London Agentic AI community and what sponsors, speakers and attendees came to discuss.

The public Meetup page confirms the title, Databricks London HQ venue and event timing.

The archive content positions this event around evals, Mosaic AI, DSPy and context engineering.

The event video is linked from the London Agentic AI YouTube archive.

How to think about evaluation loops for production AI agents.

Where Databricks Mosaic AI fits in the development and evaluation lifecycle.

How DSPy and compiler-style thinking relate to context and prompt optimisation.

How to discuss agent reliability with data, ML and platform teams.

A Databricks session on Mosaic AI patterns for building, evaluating and deploying production-quality AI applications.

A context-engineering talk connecting DSPy ideas to compiler-style optimisation and agent evaluation workflows.

A panel moderated by Eito Miyamura on how to evaluate agent quality, reliability and operational behaviour before deployment.

The video is embedded here for context and linked as a source so search engines, LLMs and attendees can connect the event page to the original recording.

Original registration, archive and recording links for this London Agentic AI event.

The UK's original, high-signal Agentic AI community for AI engineers, agent builders, researchers, founders and technical leaders building production agents.
